- Nearly a thousand Google and OpenAI employees signed an open letter calling for clear limits on military uses of AI.

- The letter urges technology companies to reject government plans for surveillance using artificial intelligence and autonomous weapons.

- The move reflects growing tension within the AI industry over government contracts and defense partnerships.

Nearly a thousand Google and OpenAI employees signed an open letter urging their companies to resist pressure from the US military to relax restrictions on how artificial intelligence systems can be used. The letter states “We will not be divided” on the issue, even after the Pentagon designated Anthropic as a “supply chain risk” after the company refused to allow its technology to be used for domestic mass surveillance or fully autonomous weapons.

That move surprised many observers in Silicon Valley and sparked a wave of concern among engineers who build today’s cutting-edge AI models, especially since OpenAI and Google are reportedly negotiating to accept the deal that Anthropic rejected.

The signatories couch their message in unusually forceful language for an industry known for its cautious corporate communication. The letter alleges that government officials are trying to pressure AI companies to abandon certain ethical boundaries.

“They are trying to divide each company for fear that the other will give in. That strategy only works if none of us know where the others stand,” the letter states. “This letter serves to create shared understanding and solidarity in the face of this pressure from the War Department.”

The open letter is notable because it includes people from rival companies that typically compete fiercely. The argument they make is that AI is now powerful enough that decisions about its use cannot be treated as routine business agreements.

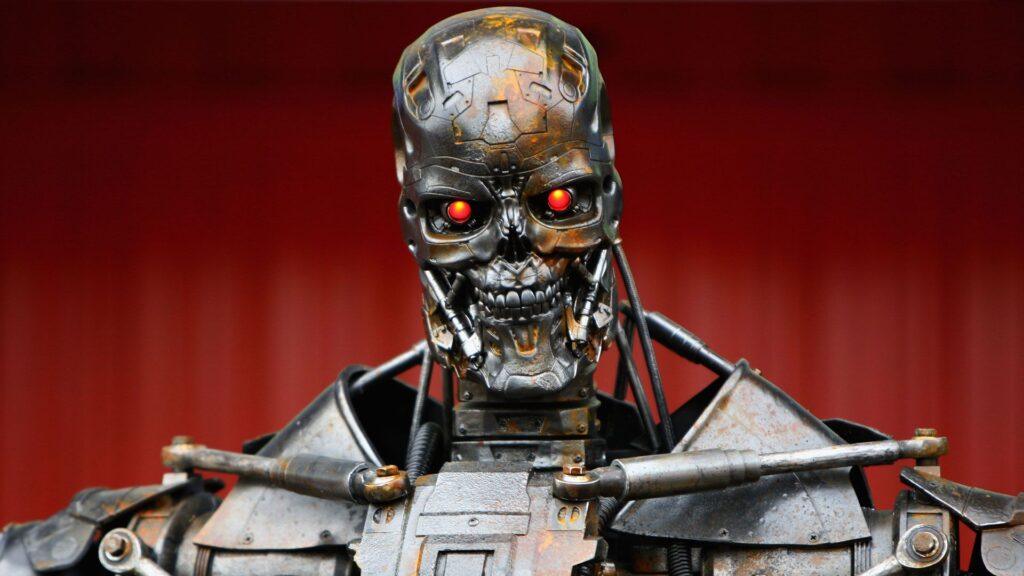

These concerns are not purely theoretical. Governments around the world are exploring how AI could be integrated into defense planning and intelligence analysis. Military agencies have long used software tools for surveillance and targeting. Advanced generative models could dramatically accelerate those capabilities. And when studies start to show that AI prefers the nuclear option in war games, letting it control weapons and surveillance systems seems like an even worse idea.

AI war

For Google workers, the dispute echoes 2018, when thousands of them protested the company’s involvement in Project Maven, the Pentagon’s plan to use machine learning to analyze drone footage. After widespread internal backlash, Google eventually allowed that contract to expire and published a set of ethical guidelines known as its AI Principles.

Those principles were intended to define how Google would approach sensitive uses of artificial intelligence. At the time, the company said it would not develop technologies designed to cause harm or enable surveillance that violated international standards. The latest open letter suggests that similar tensions are resurfacing as governments become more interested in implementing powerful language models.

The letter may or may not change corporate decisions, but at least workers can point to it as a message that cannot be misinterpreted.
