- AI tools can convert scanned handwriting into installable TrueType font files

- Clear writing improves accuracy of AI-generated digital fonts

- Messy handwriting can confuse character detection during automatic font creation

Artificial intelligence systems are gradually being incorporated into creative tasks that previously required specialized software. A new example shows that they can transform handwritten characters into a digital typeface.

When a user writes the alphabet, numbers and punctuation on paper, scans the page and uploads it to an AI assistant, the system converts the shapes into a TrueType font file.

The font produced depends on the user’s handwriting, meaning that people with naturally legible handwriting are likely to achieve better results than those with unclear or inconsistent letterforms.

From handwritten characters to digital typography

The process gained attention after software engineer and artificial intelligence specialist Ashe Magalhaes showed how Anthropic’s latest models could generate a working font directly from a handwritten sample.

The approach relies on the capabilities of the company’s Claude AI assistant, which can call external Python tools to complete more complex tasks.

The basic method requires writing the uppercase letters A to Z, the lowercase letters a to z, numbers, and punctuation on a piece of paper.

The page is then scanned and uploaded. The AI analyzes each letter, traces its outline, and converts it into vector shapes that form the basis of a font file.
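
One small step in any such conversion is moving from pixel coordinates to the coordinate system a font uses. The sketch below is only an illustration of that step, not the code the assistant ran. The function name `to_font_units`, the 128-pixel template cell and the baseline position are all assumptions made for the example, and 2048 units per em is simply a common choice for TrueType fonts.

```python
def to_font_units(contour, cell_height_px, baseline_px, units_per_em=2048):
    """Map pixel points of a traced outline to font design units.

    contour: list of (x, y) pixel points, with y growing downward.
    cell_height_px: pixel height of the template cell treated as one em.
    baseline_px: y pixel coordinate of the writing baseline in that cell.
    """
    scale = units_per_em / cell_height_px
    # Flip the y axis: image rows grow downward, while font outlines
    # grow upward from the baseline at y = 0.
    return [
        (round(x * scale), round((baseline_px - y) * scale))
        for x, y in contour
    ]


# Hypothetical traced rectangle sitting on the baseline of a 128 px cell.
square = [(0, 20), (40, 20), (40, 80), (0, 80)]
outline = to_font_units(square, cell_height_px=128, baseline_px=80)
print(outline)
```

Flipping the vertical axis also reverses the direction in which each contour is drawn, so a real pipeline would likely need to check contour direction before writing the font file.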

During testing, the AI tool first provided a template designed to arrange characters neatly on the page.

The instructions emphasized clear writing, consistent spacing, and a correctly scanned image without shadows or uneven lighting.

Clean outlines make it easier for the system to detect and separate individual characters before assembling them into a digital typeface.
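
Separating characters on a scanned page is often done by grouping connected ink pixels. The following is a minimal sketch of that idea using only the Python standard library, assuming the scan has already been reduced to a grid where `#` marks ink. The function name `find_components` and the tiny sample grid are hypothetical, and nothing here is confirmed to be the method the assistant used.

```python
from collections import deque


def find_components(grid):
    """Return bounding boxes (top, left, bottom, right) of ink blobs.

    grid is a list of equal-length strings where '#' marks ink.
    Pixels are joined with 8-connectivity so diagonal strokes stay whole.
    """
    rows, cols = len(grid), len(grid[0])
    seen = [[False] * cols for _ in range(rows)]
    boxes = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != "#" or seen[r][c]:
                continue
            # Breadth-first flood fill from this unvisited ink pixel.
            queue = deque([(r, c)])
            seen[r][c] = True
            top, left, bottom, right = r, c, r, c
            while queue:
                y, x = queue.popleft()
                top, bottom = min(top, y), max(bottom, y)
                left, right = min(left, x), max(right, x)
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < rows and 0 <= nx < cols
                                and grid[ny][nx] == "#"
                                and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            boxes.append((top, left, bottom, right))
    # Sort left to right so boxes follow reading order on a single line.
    return sorted(boxes, key=lambda box: box[1])


# Two blobs separated by blank columns: a vertical bar and a small loop.
page = [
    "#...##.",
    "#...#.#",
    "#...##.",
]
boxes = find_components(page)
print(boxes)
```

Shadows or uneven lighting add stray dark pixels that can bridge neighboring blobs, which is one plausible reason the instructions stressed a clean scan and consistent spacing.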

Once the page was uploaded, the system attempted to process the image using open-source Python libraries.

Initial results were imperfect: the first file contained distorted shapes that looked more like inkblots than recognizable letters.

After reviewing the problem, the system concluded that it had failed to detect the outer outlines of several characters and restarted the conversion process.

Further attempts improved the result, and the second file produced fairly legible letters.

However, characters containing internal spaces, such as O, A, or R, initially appeared as solid shapes without openings. Additional processing corrected those shapes and produced a more usable font.
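
Letters come out solid when only the outer boundary of a shape is traced and the enclosed background is ignored. As an illustration, the sketch below counts the enclosed regions in a small glyph bitmap, which a pipeline could use to check whether a letter should have openings. The name `count_counters` and the sample bitmaps are hypothetical, and this is an assumed approach rather than the fix the assistant applied.

```python
from collections import deque


def count_counters(grid):
    """Count enclosed background regions (holes) in a glyph bitmap.

    grid is a list of equal-length strings where '#' marks ink.
    """
    cols = len(grid[0])
    # Pad with a one-pixel background border so the outside is one region.
    blank = "." * (cols + 2)
    padded = [blank] + ["." + row + "." for row in grid] + [blank]
    height, width = len(padded), cols + 2
    seen = [[False] * width for _ in range(height)]

    def fill(start_y, start_x):
        # 4-connected flood fill over background pixels.
        queue = deque([(start_y, start_x)])
        seen[start_y][start_x] = True
        while queue:
            y, x = queue.popleft()
            for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                if (0 <= ny < height and 0 <= nx < width
                        and padded[ny][nx] == "." and not seen[ny][nx]):
                    seen[ny][nx] = True
                    queue.append((ny, nx))

    fill(0, 0)  # Mark everything reachable from outside the glyph.
    holes = 0
    for y in range(height):
        for x in range(width):
            if padded[y][x] == "." and not seen[y][x]:
                # Background that the outside fill never reached.
                holes += 1
                fill(y, x)
    return holes


letter_o = ["####", "#..#", "####"]
letter_b = ["###", "#.#", "###", "#.#", "###"]
letter_l = ["#.", "#.", "##"]
print(count_counters(letter_o), count_counters(letter_b), count_counters(letter_l))
```

Each region found this way would need its own inner contour in the font outline for the opening to show up.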

Later testing surfaced further issues. In one case the letters “x” and “y” were merged into a single glyph, requiring additional adjustments before the final version worked correctly.
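
A merge like this can happen when two neighboring letters touch or sit very close together. One simple heuristic for pulling them apart is to cut at the column with the least ink. The sketch below assumes exactly two glyphs of similar width in a bitmap at least three columns wide; the name `split_merged` and the sample bitmap are hypothetical, and the article does not say how the assistant actually resolved the problem.

```python
def split_merged(grid):
    """Split a bitmap holding two touching glyphs at its emptiest column.

    grid is a list of equal-length strings where '#' marks ink.
    Returns (cut, left, right); the cut column itself is discarded.
    """
    cols = len(grid[0])
    # Ink count per column, also called the vertical projection profile.
    profile = [sum(row[c] == "#" for row in grid) for c in range(cols)]
    center = cols // 2
    # Search interior columns only so neither half is empty, and prefer
    # the column nearest the middle when several have equally little ink.
    cut = min(range(1, cols - 1),
              key=lambda c: (profile[c], abs(c - center)))
    left = [row[:cut] for row in grid]
    right = [row[cut + 1:] for row in grid]
    return cut, left, right


# An "x" and a "y" joined by a single stray pixel in the middle column.
merged = [
    "#.#.#.#",
    ".#.#.#.",
    "#.#..#.",
]
cut, left, right = split_merged(merged)
print(cut)
print("\n".join(left))
print("\n".join(right))
```

The heuristic is fragile for letters that are naturally thin in the middle, so a more careful pipeline would probably compare each blob's width against the template cell before deciding to split.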

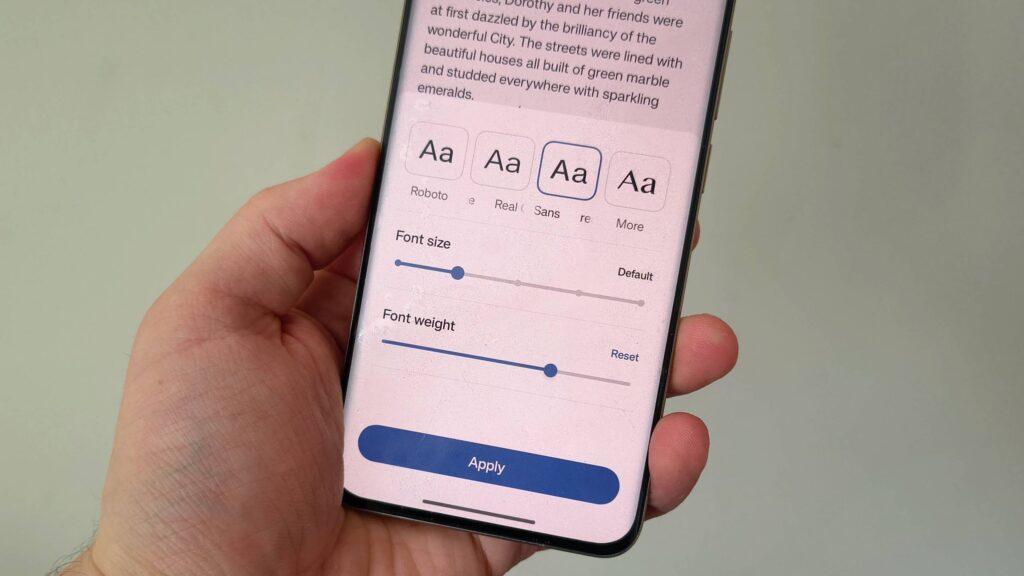

Earlier methods relied on dedicated software such as Calligraphr, HandFonted or FontForge, which perform the same task with greater control. This new workflow reduces the process to a brief exchange with an artificial intelligence system.

It remains uncertain whether this approach will consistently produce reliable fonts, although it shows how generative models are gradually entering small creative workflows.
