- Nvidia CEO Jensen Huang predicts great times ahead

- Huang says he expects at least $1 trillion from Rubin and Blackwell sales

- Nvidia unveils new Vera chips and server racks at GTC 2026

Jensen Huang has said he expects Nvidia to make at least $1 trillion from sales of its AI hardware through 2027.

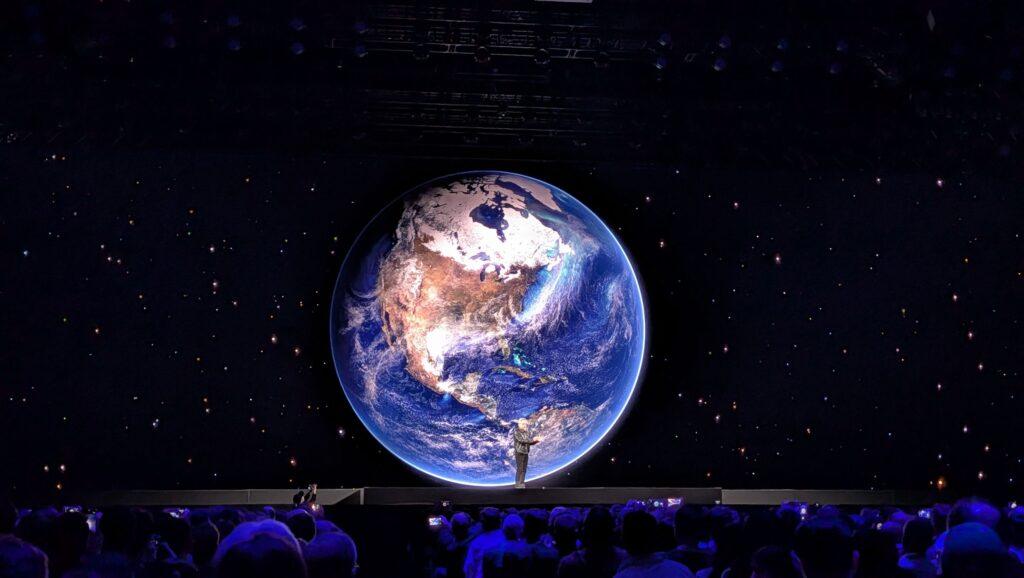

In his keynote speech at Nvidia GTC 2026, the CEO and co-founder said sales of the company's Blackwell and Rubin chips will be a major source of revenue in the coming months.

And that may not be all: Nvidia also announced a series of new hardware launches, further expanding its range of offerings.


Everything in computing

“I see (AI chip sales) until 2027: at least $1 trillion,” Huang declared in a presentation packed with announcements and focused on meeting the growing demand for computing in the AI era.

“I think the demand for computing has increased a million-fold in the last two years,” Huang said. “It’s the feeling we all have. It’s the feeling every startup has.”

The $1 trillion figure raised eyebrows among thousands of attendees at Nvidia GTC 2026, especially since Huang noted that the company had previously forecast that data center equipment would generate $500 billion in sales through the end of 2026.

To maintain this momentum, Huang made several major announcements on stage, including no fewer than seven new Vera Rubin chips.

These include a new Vera CPU, available in the second half of 2026, which the company says is “purpose-built” for agentic AI. Nvidia claims it offers twice the efficiency of traditional CPUs and runs 50% faster, along with the highest single-threaded performance and per-core bandwidth available today.

Nvidia also announced a new chassis that integrates 256 liquid-cooled Vera CPUs, enough to support more than 22,500 simultaneous CPU environments, each operating independently at full performance. The chassis is a key part of the company's push toward “AI factories” that will power use cases ranging from quantum computing to robotics.
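As a rough sanity check on those chassis figures (our own arithmetic, not a number Nvidia quoted), dividing the environment count by the CPU count gives the approximate number of independent environments each Vera CPU would need to host:

$$
\frac{22{,}500 \text{ environments}}{256 \text{ CPUs}} \approx 87.9 \text{ environments per CPU}
$$

That works out to roughly 88 environments per CPU. How Nvidia actually maps those environments onto cores was not detailed, so treat this as an estimate derived from the two headline numbers only.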

Huang also revealed that the Groq 3 LPU (language processing unit) will now be part of Nvidia’s product line, helping to drive large language model (LLM) inference and improving how responses to AI prompts are generated.
