- Floating Data Centers Aim to Avoid Grid Bottlenecks by Deploying Offshore

- Samsung model plugs directly into coastal power for faster AI scaling

- Offshore barges could significantly reduce data center deployment timelines

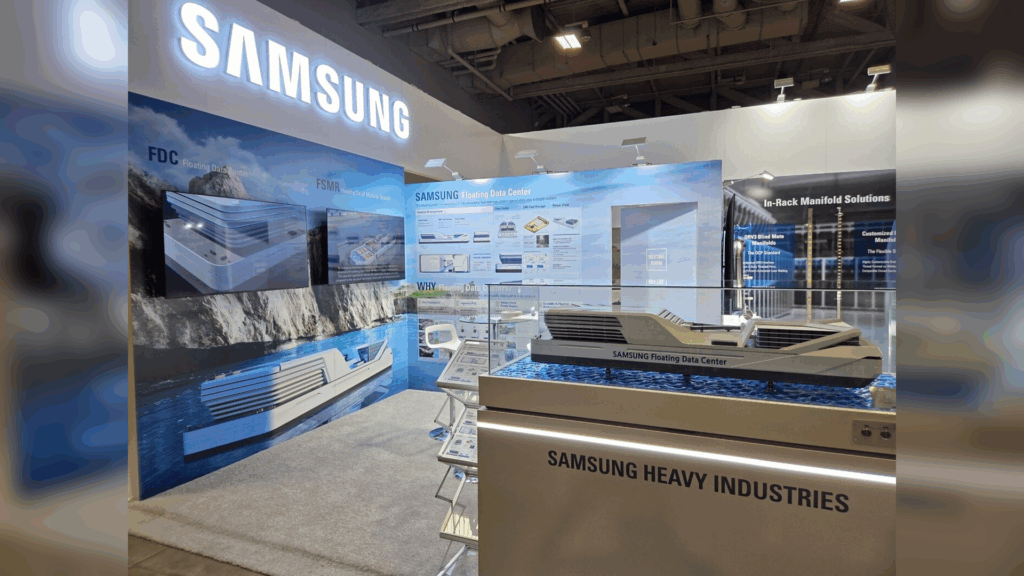

Samsung Heavy Industries has unveiled a model of a large floating data center ship that could support artificial intelligence tools around the world.

Samsung and OpenAI signed a letter of intent in October 2025 for a comprehensive partnership that includes the development of a floating data center.

The design of this data center is specifically intended to host future versions of systems like OpenAI’s ChatGPT on an aquatic platform.


Offshore deployment strategy for AI infrastructure

The vessel would be moored offshore and connected directly to power and cooling at coastal energy sites.

Samsung says the concept compresses the typical years-long construction of terrestrial data centers into a much shorter timeline.

The project also involves Mousterian Corp., a Dallas-based infrastructure developer focused on high-density AI computing.

The floating model aims to shorten the time needed to secure power and cooling for AI workloads.

Rather than waiting for new grid connections, the barges dock near existing thermal or nuclear power plants.

This approach treats the coastline as a deployment zone for digital infrastructure, with barges carrying fully liquid-cooled data rooms that can scale with demand.

The developers argue that “speed to power is the new moat” for AI tools and cloud operators.

Operators who can bring compute and power online quickly gain a real advantage over slower rivals.

That is why the partners say this strategy could shrink capacity delivery from years to quarters at some sites.

The Dallas-based partner says the floating data center initiative aims to deliver more than 1.5 GW of capacity in about three years.

“Speed to power is the new moat. We have carefully partnered with some of the leading global conglomerates, which will allow us to deliver over 1,500 MW of capacity over the next 3 years,” said Min Suh, CEO of Mousterian Corp.

That figure implies multiple barge-based projects, each subject to local grid and energy constraints.

Each ship would house thousands of servers designed for AI training and inference loads.

The 1.5 GW target also depends on approvals, construction speed and the availability of suitable waters next to baseload plants.

Some analysts doubt that pace can be sustained in practice, and maritime data centers still face large-scale technical, regulatory and economic hurdles.

Operational risks and uncertainties at sea

Although floating data centers address some of the problems associated with terrestrial data centers, they also introduce new challenges.

Experts are concerned that these facilities create new risks in cybersecurity, physical access and long-term reliability.

Saltwater environments, storm exposure, and emergency response times significantly complicate operations.

Maintenance and fiber-optic connectivity are also more complex offshore than on land.

Furthermore, claims of delivering 1.5 GW in 36 months are based on unproven timelines for shipbuilding, permitting and tenant onboarding.

Demand for AI computing and data center capacity is real, but execution of the plan remains uncertain.

The model may add a niche option rather than overhaul how most AI computing is hosted, and the real test will be how many barges actually connect as planned.

Via Dallas Innovates

Follow TechRadar on Google News and add us as a preferred source to receive news, reviews and opinions from our experts in your feeds.