- Google unveils its next-generation TPU, split into two series: 8t and 8i

- 8t superpods can deliver 121 ExaFlops, up from 42.5 last year

- 8i offers 3 times more SRAM and more HBM

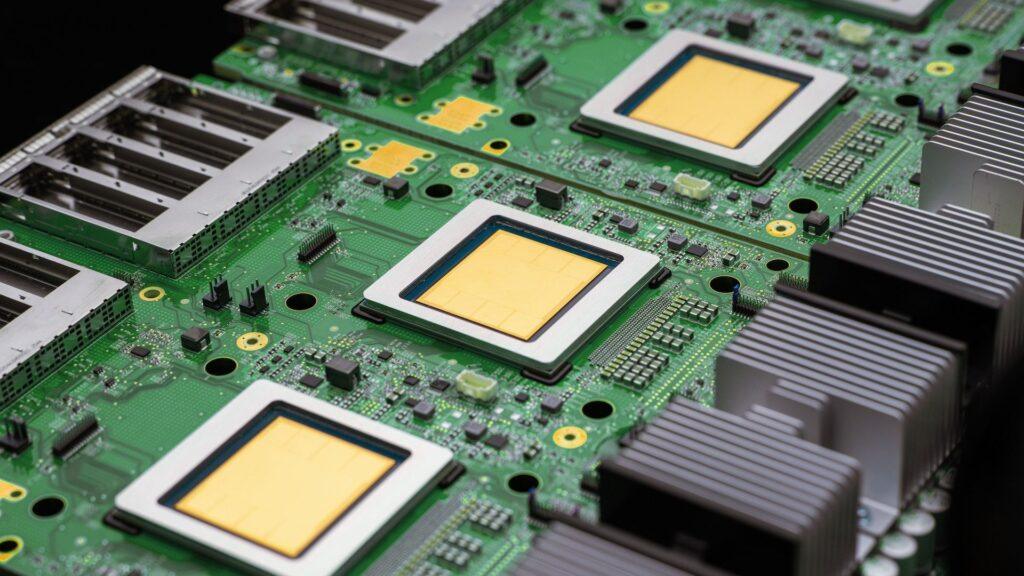

Google Cloud has announced its eighth-generation Tensor Processing Units (TPUs), designed specifically for the agentic shift currently underway in AI.

Revealed at Google Cloud Next 2026, the updates focus on longer context windows, multi-step reasoning, and responsiveness at scale. To that end, Google is rebuilding its cloud infrastructure to support persistent memory, continuous inference, and multi-model workloads.

This year, we’re seeing two different TPUs designed to support massive HBM scaling, with Google Cloud putting an emphasis on both memory bandwidth and compute.

TPU 8t and 8i aim to train billion-parameter models across clusters of millions of chips

The first of the two TPUs, 8t, has been optimized for distribution across large clusters to train foundation models. The company says an 80% year-over-year improvement in performance per dollar will let it train billion-parameter models more efficiently.

Google Cloud explained that a single TPU 8t superpod can scale up to 9,600 chips, delivering 2PB of shared HBM and 121 ExaFlops of compute. For comparison, last year's Ironwood scaled up to 9,216 chips per superpod and 42.5 ExaFlops.
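To put the superpod figures in per-chip terms, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above; the dictionary and function names are illustrative, decimal units (1 PB = 1,000,000 GB) are an assumption, and the article does not say which numeric precision the ExaFlops figures refer to, so the two generations may not be directly comparable.

```python
# Figures as quoted in the article; the names below are illustrative only.
TPU_8T = {"chips": 9_600, "exaflops": 121.0, "hbm_pb": 2.0}
IRONWOOD = {"chips": 9_216, "exaflops": 42.5}


def per_chip_petaflops(pod: dict) -> float:
    """Average compute per chip in PetaFlops (1 ExaFlop = 1,000 PetaFlops)."""
    return pod["exaflops"] * 1_000 / pod["chips"]


def per_chip_hbm_gb(pod: dict) -> float:
    """Average HBM per chip in GB, assuming decimal units (1 PB = 1e6 GB)."""
    return pod["hbm_pb"] * 1_000_000 / pod["chips"]


# Superpod-level and chip-level generational ratios.
superpod_speedup = TPU_8T["exaflops"] / IRONWOOD["exaflops"]
per_chip_speedup = per_chip_petaflops(TPU_8T) / per_chip_petaflops(IRONWOOD)

if __name__ == "__main__":
    print(f"8t per chip:       {per_chip_petaflops(TPU_8T):.1f} PFlops")
    print(f"Ironwood per chip: {per_chip_petaflops(IRONWOOD):.1f} PFlops")
    print(f"Superpod speedup:  {superpod_speedup:.2f}x")
    print(f"Per-chip speedup:  {per_chip_speedup:.2f}x")
    print(f"8t HBM per chip:   {per_chip_hbm_gb(TPU_8T):.0f} GB")
```

On these numbers, the superpod gain is about 2.85x, of which only around 4% comes from the larger chip count. The rest is per-chip improvement, from roughly 4.6 to 12.6 PetaFlops. The implied HBM per chip of about 208GB is only as precise as the rounded 2PB figure.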

Google Cloud also warned about “the wall of latency” we face in an era of always-on agents, hence the launch of 8i, a second chip that serves as an inference and post-training engine.

TPU 8i sees a roughly 3x increase in on-chip SRAM to 384MB, alongside 288GB of HBM. Pod size is now up to 1,152 chips from 256, delivering 11.6 ExaFlops of performance (up from 1.2 ExaFlops).
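The same kind of arithmetic can be applied to the 8i figures. The sketch below is a rough calculation from the quoted numbers only; the variable names are illustrative, decimal units are assumed, the previous-generation SRAM value is derived from the "roughly 3x" claim rather than stated in the article, and the precision behind the ExaFlops figures is not specified.

```python
# Figures as quoted in the article; variable names are illustrative only.
CHIPS_NEW, CHIPS_OLD = 1_152, 256
EXAFLOPS_NEW, EXAFLOPS_OLD = 11.6, 1.2
SRAM_MB_PER_CHIP = 384
HBM_GB_PER_CHIP = 288

# How much of the pod-level gain comes from more chips vs. faster chips.
chip_count_ratio = CHIPS_NEW / CHIPS_OLD
pod_compute_ratio = EXAFLOPS_NEW / EXAFLOPS_OLD
per_chip_ratio = pod_compute_ratio / chip_count_ratio

# Average compute per chip in PetaFlops (1 ExaFlop = 1,000 PetaFlops).
pflops_per_chip_new = EXAFLOPS_NEW * 1_000 / CHIPS_NEW
pflops_per_chip_old = EXAFLOPS_OLD * 1_000 / CHIPS_OLD

# Aggregate memory across a full pod, assuming decimal units.
pod_hbm_tb = HBM_GB_PER_CHIP * CHIPS_NEW / 1_000
pod_sram_gb = SRAM_MB_PER_CHIP * CHIPS_NEW / 1_000

# Derived, not stated in the article: SRAM implied by "roughly 3x".
implied_prev_sram_mb = SRAM_MB_PER_CHIP / 3

if __name__ == "__main__":
    print(f"Chip count ratio:    {chip_count_ratio:.1f}x")
    print(f"Pod compute ratio:   {pod_compute_ratio:.2f}x")
    print(f"Per-chip ratio:      {per_chip_ratio:.2f}x")
    print(f"Pod HBM:             {pod_hbm_tb:.0f} TB")
    print(f"Pod SRAM:            {pod_sram_gb:.0f} GB")
    print(f"Implied prior SRAM:  {implied_prev_sram_mb:.0f} MB per chip")
```

Read this way, the near-10x jump in pod-level compute splits into a 4.5x larger pod and a little over 2x more compute per chip, with a full pod holding roughly 332TB of HBM.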

In terms of energy and thermal efficiency, Google Cloud claims up to 2 times better performance per watt than the predecessor, Ironwood.

“We innovated in hardware and software to enable our data centers to deliver six times more computing power per unit of electricity than just five years ago,” explained Amin Vahdat, Senior Vice President and Chief Technologist of AI and Infrastructure.

General availability for Google Cloud customers is expected in the coming months, and naturally the TPU 8t and TPU 8i will be at the forefront of the latest Gemini models.

The company also sees eighth-generation hardware playing a role in developing next-frontier models by distributing training beyond a single superpod, using Pathways and JAX to unlock scaling beyond one million TPU chips per training pod. Executives confirmed at the event that this is currently entirely theoretical (though technically possible), as TPUs are not yet available at such a scale.
