- Researchers have created a NAND-DRAM hybrid, inspired by legacy camera technology

- Indium gallium zinc oxide also promises benefits over silicon

- For now, this is just a prototype that needs more work

Belgian semiconductor research center imec has unveiled what it claims is the first 3D implementation of a charge-coupled device (CCD) memory architecture, reviving a technology we've previously seen in digital cameras and camcorders, but for an entirely different purpose.

With the 3D CCD architecture, researchers aim to tackle one of the biggest bottlenecks in AI computing today: the memory wall, where GPUs and accelerators spend more time waiting for data than processing it because memory bandwidth and power efficiency have not kept pace with compute.

The design aims to combine the speed and rewritability of DRAM with the density and efficiency of NAND in a hybrid of the two.

Old camera technology could lead to future generations of memory

CCD technology is nothing new: charge-coupled devices have long been used in digital cameras, video transmission equipment, scientific imaging and even astronomical sensors, though they have since been largely replaced by CMOS image sensors.

CCDs work by physically shifting packets of electrical charge from one semiconductor gate to the next, like a bucket brigade, and imec applies the same principle to move data through memory efficiently.
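To make the bucket-brigade idea concrete, here is a toy Python model of a one-dimensional CCD chain. It is a sketch under simplifying assumptions, not imec's design: each gate holds a single bit rather than an analog charge packet, transfer is lossless, and the helper names (`clock`, `write_block`, `read_block`) are hypothetical. It shows why CCD access is inherently sequential: data enters at one end, leaves at the other in first-in, first-out order, and reading drains the chain.

```python
def clock(gates, incoming=0):
    """One transfer cycle of an idealized (lossless, 1 bit per gate) CCD chain.

    Every charge packet moves one gate toward the output (index 0).
    Returns the packet leaving the output gate and the new chain.
    `incoming` is the charge injected at the far end (0 = empty well).
    """
    return gates[0], gates[1:] + [incoming]


def write_block(bits, length):
    """Shift `bits` into an empty chain of `length` gates, one bit per clock.

    Assumes len(bits) == length so the chain ends up exactly full.
    """
    gates = [0] * length
    for bit in bits:
        _, gates = clock(gates, bit)
    return gates


def read_block(gates):
    """Clock the whole chain out; returns (data read, chain left behind)."""
    data = []
    for _ in range(len(gates)):
        out, gates = clock(gates)
        data.append(out)
    return data, gates


chain = write_block([1, 0, 1, 1], length=4)
data, chain = read_block(chain)
print(data)   # [1, 0, 1, 1]: first in, first out
print(chain)  # [0, 0, 0, 0]: reading moved every packet out of the chain
```

Because every read is a sequence of shifts, this kind of device naturally favors moving whole blocks of data rather than picking out individual bytes.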

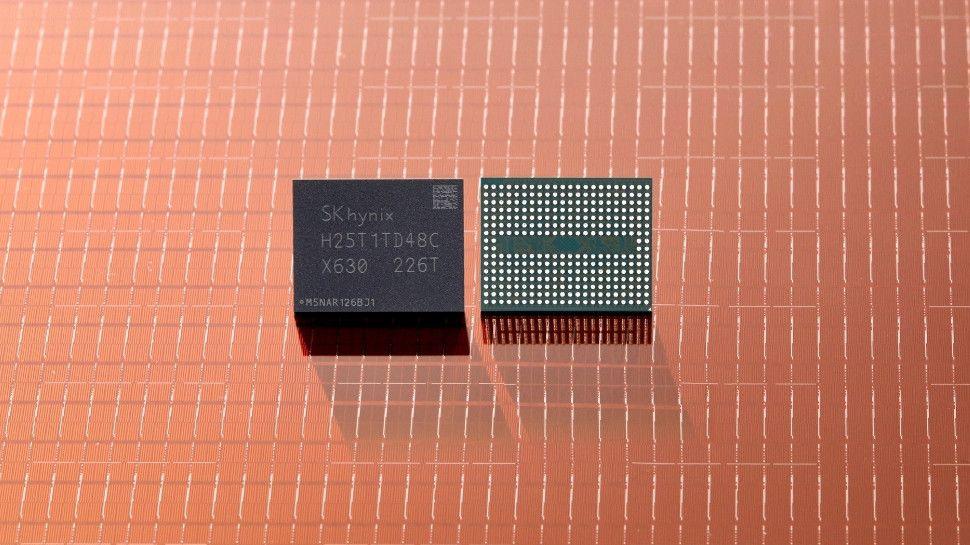

Rather than laying memory cells side by side in a plane, as conventional DRAM does, the design stacks them vertically, much like 3D NAND. This matters because DRAM is held back by charge leakage, rising manufacturing costs and slowing density improvements.

The chips also replace silicon with IGZO (indium gallium zinc oxide), which promises lower leakage, longer data retention, easier low-temperature processing and good compatibility with dense 3D stacking.

imec has already demonstrated charge transfer at rates above 4MHz with this hybrid architecture, but the technology is at a very early stage and the prototype uses only a small number of stacked layers. In theory, it could scale like 3D NAND, where commercial chips now exceed 200 layers.

The CCD architecture also appears to suffer from fewer wear mechanisms, with endurance that could even surpass NAND's, which would make it well suited to intensive workloads such as AI training clusters and inference servers.

“Unlike byte-addressable DRAM, our 3D CCD device is designed to provide block-level data access, which is better suited for modern AI workloads,” said Storage Memory Program Director Maarten Rosmeulen.

“The potential for this CCD device to be used as a buffer lies in its ability to be integrated into a 3D NAND Flash string architecture – the most cost-effective way to achieve high, scalable bit density that is estimated to go well beyond the limit of DRAM.”
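The difference between byte-addressable and block-level access can be illustrated with a toy Python model. The 4,096-byte block size and the `BlockMemory` class are hypothetical choices for illustration, not details of imec's device. A block device must transfer every block that overlaps a request, so a one-byte read wastes most of what it moves, while a large contiguous read, such as streaming model weights, uses every byte it transfers.

```python
BLOCK_SIZE = 4096  # hypothetical block size in bytes, for illustration only


class BlockMemory:
    """Toy block-addressed memory that counts how many blocks it transfers."""

    def __init__(self, num_blocks, block_size=BLOCK_SIZE):
        self.block_size = block_size
        self.storage = bytearray(num_blocks * block_size)
        self.blocks_transferred = 0

    def read(self, addr, n):
        """Return n bytes at addr, fetching every whole block they touch."""
        first = addr // self.block_size
        last = (addr + n - 1) // self.block_size
        self.blocks_transferred += last - first + 1
        start = first * self.block_size
        fetched = self.storage[start:(last + 1) * self.block_size]
        offset = addr - start
        return bytes(fetched[offset:offset + n])


mem = BlockMemory(num_blocks=512)

mem.read(5000, 1)  # a single byte still costs one full block
print(mem.blocks_transferred)  # 1

mem.blocks_transferred = 0
mem.read(0, 1 << 20)  # a contiguous 1 MiB tensor
print(mem.blocks_transferred)  # 256, with no bytes wasted
```

Real DRAM also moves data in cache-line-sized bursts, so the contrast is one of granularity: for the large sequential transfers common in many AI workloads, coarse blocks cost little.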

The research also outlines future plans for the architecture, positioning it as a CXL Type 3 device: a memory expander that attaches to CPUs, GPUs and accelerators over Compute Express Link, the industry-standard interconnect. This matters because hyperscalers are increasingly turning to CXL as AI models outgrow the memory attached to individual GPUs.

As the device is still a research prototype, many hurdles remain, including thermal behavior, layer-count scaling and, of course, real-world integration. If it succeeds, however, the hybrid architecture could significantly reduce one of the biggest costs in AI infrastructure: DRAM.

Looking further ahead, imec suggests that the next phase of memory development may involve an entirely new class of memory architecture rather than continued evolution of existing designs.

Follow TechRadar on Google News and add us as a preferred source to receive news, reviews and opinions from our experts in your feeds.